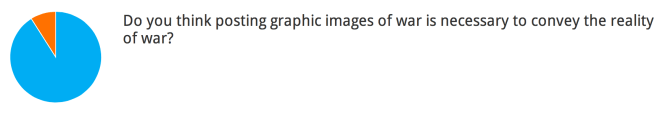

Last week we ran an Urtak poll of Dish readers regarding the thread and the questions it raises. Below are the results from the roughly 1,000 readers who responded (blue means yes, orange means no):

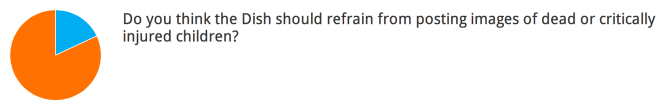

But that support dropped a little when it came to graphic images of kids:

And although readers overwhelmingly support the idea of posting graphic images, they seemed sensitive to the impact those images have on the small minority of readers who oppose them:

Understandably, the aversion to seeing graphic images of kids was higher among readers who have children (22% aversion) than among those who do not (14% aversion). Review all of the poll results here. Another reader continues the thread:

I am a little surprised that most of your readers either object to or insist on war photography for similar reasons: confronting the reality of a war that we take part in but don’t generally have to look at. It’s surprising to me that no one is really questioning the idea that the photography in some way allows us to access this reality. It doesn’t. It’s a picture. One of the common complaints in theories of photography is that looking at these images actually desensitizes the viewer by making them complicit in the act of photography, which is by its nature an act of non-intervention.

It makes documenting the event seem, in some contexts, more important than the event itself. Think of photographs of starving children, where the photographer presumably could feed the child but takes a picture instead, arguing that if the world sees the starving child, more children could be saved than the one extra day of life the photographer could provide by feeding this one. But it also turns the act of looking at the photograph into a feeling that you have done something important by confronting something horrible, when in fact the viewer hasn’t done anything at all, hasn’t even confronted something horrible, and has just looked at a picture.

And then there is the argument that the shock of seeing carnage like this wears off, and that any sadness or horror the viewer feels on being confronted with the image takes the place of the action or more critical thought that another way of presenting the facts of war might engender. I can’t for the life of me remember where I read it, but I think it’s true that the magazines that first published photos of starving children in the ’80s sparked a lot of donations, but not many after that. The photograph insists on the grinding reality of what it portrays, which suggests that these horrors always exist in the world with or without our participation, while asking of its viewers only that they look. All you have to do is view the pictures and you have done your part in recognizing the horrors of war. Now pat yourself on the back, and be sure to look at tomorrow’s pictures from some other bloody conflict.

I don’t really have an opinion about whether or not you post pictures. They make me sad, but they don’t give me nightmares. Reading about the conflicts also makes me sad. But this isn’t a simple question of whether your readers have a moral obligation to see this stuff. It’s a lot more complicated than that, and being aware of those complications can help your readers steer clear of these traps.

