Yesterday, Ezra Klein applauded the Senate for reforming the filibuster:

With gun control dead, immigration reform on life support and bitter disagreement between the House and Senate proving the norm, it looked like the 113th Congress would be notably inconsequential. Today, it became notably consequential. It has changed how all congresses to come will work. Indeed, this might prove to be one of the most significant congresses in modern times. Today, the political system changed its rules to work more smoothly in an age of sharply polarized parties. If American politics is to avoid collapsing into complete dysfunction in the years to come, more changes like this one will likely be needed.

Fallows’s related thoughts:

Whichever party controls the government, has to be able to govern. Our checks-and-balances system, crafted in the demographic and political realities of 18th-century, 13-state, slave/free, Eastern Seaboard America and in many ways showing its age, did not ever contemplate a permanent blocking minority in the Senate as one of the regular “checks.” You can look it up. Minority protection is an important part of our overall Constitutional balance, but not in proceedings of the Senate. Outside a named class of special circumstances — impeachment, treaties, veto-overrides, etc — the Senate, whose all-states-equal formula already over-represents regional minorities, was intended to run as a majority-rule operation.

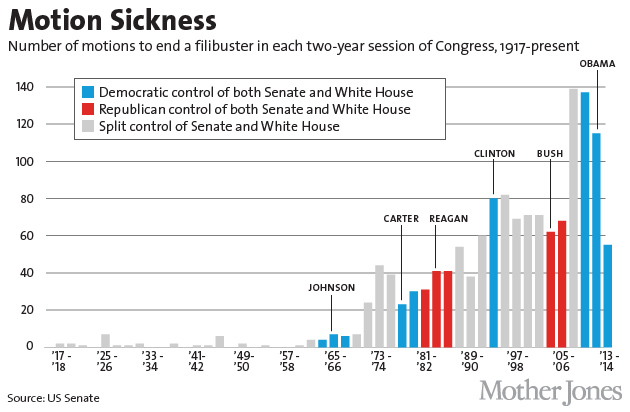

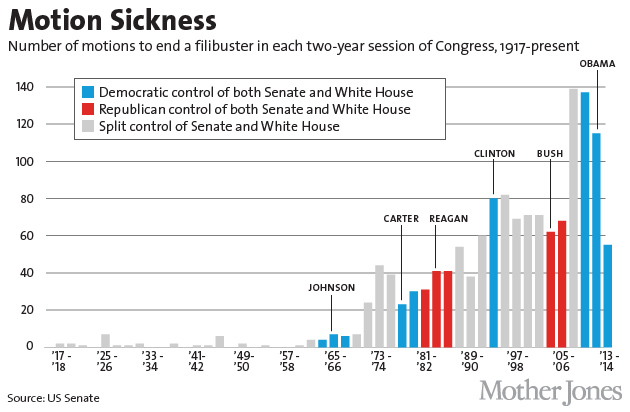

Drum passes along the chart above:

As you can see, their use rose steadily through the 80s and then leveled off starting around 1990. Democrats mainly kept things pretty stable throughout the Bush administration, increasing the number of filibusters only in his last two years when a change of power seemed imminent. When Obama took over, however, there was no honeymoon, not even for a minute. Republicans went into full-bore filibuster mode the day he took office, and they’ve kept it up ever since. For all practical purposes, anything more controversial than renaming a post office has required 60 votes during the entire Obama presidency.

Binder considers what will change:

The most likely effect will be felt when the president’s party controls the Senate. Before today’s change, presidents (typically, although not always) chose nominees with an eye to whether the nominees could secure 60 votes for cloture. With only a majority required to bring the Senate to a confirmation vote, there will be little incentive for presidents to consult. I think the biggest potential effect will be visible with appointments to the federal bench. Delays in selecting nominees in theory should go down, to the extent that these delays have been caused by an administration’s inability to secure the advance consent of home state senators. Home state senators from the president’s party will no doubt still wield influence (their votes are still needed), but the rule change today shifts important leverage to the president in selecting nominees for lifetime appointments to the bench. That said, when the opposition party controls the Senate, presidents’ leeway will be trimmed: A Senate majority of the opposite party must still approve the president’s choices.

Dickerson notes other effects:

Whoever is ultimately at fault for the rule change—the Democrats who forced it or the Republicans who blocked the nominations requiring the new rules—the result is that the minority will have less power. That means elections will matter even more than they did before. Every Republican campaign now has more incentive to fight harder to win the six seats needed to take back the Senate in the 2014 election. Presidential elections now mean more, too. Reid’s move will secure Obama’s legacy because the new nominees to the appeals court will be in a position to protect his achievements. Moderate senators will hold more power. Democratic Sens. Mark Pryor and Joe Manchin voted against the rule change. In the future, in a closely divided Senate, they are the kind of senators who will be the key vote to give or deny the majority their nominee.

Hertzberg hopes that the Senate will now do away with the filibuster altogether:

That would make government, and government policymaking, more coherent—and, more important, more accountable. Action, not gridlock, would be the norm. Over time, I believe, the new normal would be to the advantage of those among us who want our government to do its part to solve the nation’s problems and advance the well-being of the nation’s people—not those who want our government to fail, or simply to disappear. And to the advantage of those who want our government to be truly democratic, with a small “d”—even if that sometimes means with a capital “R.”

Steinglass also wants to kill the filibuster:

Democrats are still in control of the Senate for the next 14 months, at least. To use those 14 months to best advantage, they should have gotten rid of the filibuster entirely yesterday. Had they gotten rid of the filibuster in 2009, Obamacare would be a less dangerously kludgey law; they might have gotten a cap-and-trade energy law to fight climate change, immigration reform, a jobs bill, a budget compromise instead of the sequester. The filibuster has done Democrats a world of harm over the past five years, and by the time they lose the Senate, they won’t have it anyway. Apart from its partisan disadvantage to Democrats, it’s a horrible institution with no moral or practical legitimacy, “an anti-democratic monster” as Emily Bazelon puts it. There’s no reason to let it die a slow death. Democrats should finish the job. They could start on Monday.

Koger doubts that the filibuster will be abolished completely in the short term:

Since the Republicans have a majority in the House, the Democrats do not gain a lot by eliminating the filibuster altogether. And the House illustrates the pitfalls of majority party rule: limited debate, heavily censored amendments, and agenda-setting power centralized in the majority party leadership.

Adler argues that filibustering a SCOTUS nominee is now out of the question:

According to Reid, the rules change only applies to executive branch and lower court judicial nominations, and does not apply to Supreme Court nominations. That idea is a joke, as Levin’s comments imply. While there are colorable arguments as to why the Senate might want to have different rules for executive branch and judicial nominations, the line drawn by Senator Reid will not stand. As several Republicans threatened before the vote, if and when there is a Republican majority in the Senate again, there will be no filibuster of a President’s Supreme Court nominees.

Philip Klein thinks the change will be good for Republicans:

Though the rule’s changes will allow Obama to install a lot of liberal judges and executive officials in the near term, in the long run, ending the judicial filibuster will benefit conservatives. The reason is that liberals are simply much better at demonizing conservative judicial appointees, even those with sterling credentials. In many cases, this has prompted Republican presidents to choose “stealth” nominees who end up taking an expansive view of the Constitution once they’re on the bench. Scrapping the 60-vote threshold will make it easier for a future conservative president to choose judges who believe that the Constitution granted limited powers to the federal government.

Kilgore has his eyes open:

I have to say I prefer bad government to dysfunctional government. Perhaps without the fallback measure of the filibuster, the shape of the Supreme Court and of constitutional protections can become an open instead of a submerged issue in Senate and presidential elections. And if the nuclear option is eventually extended to legislative filibusters, perhaps we’ll obtain more coherent policies, and more accountable government, regardless of who wins elections.

Daniel McCarthy worries about stability:

In theory you might now get more intensely partisan nominees and greater polarization of the federal bench, as well as cabinets that are even less aware of the need to build more-than-majority-support for policies that affect everyone. American government has generally not been a thing of bare majorities, which tend to be unstable, and the political problems of Obamacare—quite apart from its policy and technological ones—are a product of a short-lived Democratic majority trying to make a great change without securing even grudging support from the other side.

The Economist looks at why this change happened now:

[B]ecause so many appointees have been blocked there are lots of positions to fill. The payoff from changing the rules now is therefore larger than it would normally be. … The success rate for nominees under the second George Bush was 91%. Under Barack Obama it has fallen to 76%. Those numbers probably give a flattering sense of how the process works because they do not reflect the amount of time nominees have had to wait before confirmation: a judge blocked for two years who eventually gets approved goes into the success column.

Emily Bazelon’s bottom line:

The fight for bipartisan normalcy has already been lost. The majority leader merely sounded the death knell. There will be lots of loud lamenting at the wake that follows. Don’t be fooled. If the Republicans were in the Democrats’ position, they’d have done the same thing months ago. Now Millett, Wilkins, and Pillard can take their seats on the bench. And soon the funeral speeches will end, and the next phase of life in the Senate will begin.

dream I ever had in my teens was situated solidly in the Doctor’s world. I was never the Doctor – always his assistant – in these dreams. I knew my place and was just glad to be around him. The dreams invariably took the shape of a classic Doctor Who scenario – walking gingerly down strange, dark corridors waiting for some scary monster to jump out and grab me. Of particular note were the Cybermen, who for me exceeded the Daleks in their inhuman, soulless scariness. The explanation for this, I realized later, was that in the episodes in my youth, the Daleks couldn’t get up the stairs to my bedroom at night. They were on wheels! The Cybermen? They could knock down the front door and march up the stairs in an instant.
