Ed Finn argues that “when we start depending on our computers to explain how and why things happened, we’ve started to outsource not just the talking points but the narrative itself”:

The idea that a computer might know you better than you know yourself may sound preposterous, but take stock of your life for a moment. How many years of credit card transactions, emails, Facebook likes, and digital photographs are sitting on some company’s servers right now, feeding algorithms about your preferences and habits? What would your first move be if you were in a new city and lost your smartphone? I think mine would be to borrow someone else’s smartphone and then get Google to help me rewire the missing circuits of my digital self. …

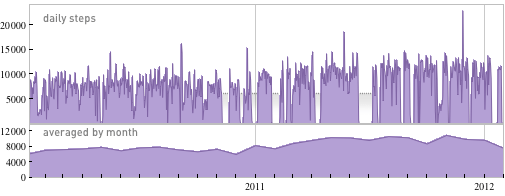

But of course we’re not surrendering our iPhones or our cloud-based storage anytime soon, and many have begun to embrace the notion of the algorithmically examined life. Lifelogging pioneers have been at it for decades, recording and curating countless aspects of their own daily existences and then mining that data for new insights, often quite beautifully. Stephen Wolfram crunched years of data on his work habits to establish a sense of his professional rhythms far more detailed (and, in some cases, mysterious) than a human reading of his calendar or email account could offer. His reflections on the process are instructive:

He argues that lifelogging is “an adjunct to my personal memory, but also to be able to do automatic computational history—explaining how and why things happened.” We may not always be ready to hear what those things are. At least one Facebook user was served an ad encouraging him to come out as gay—a secret he never shared on the service and had divulged to only one friend. As our digital selves become more nuanced and complete, reconciling them with the “real” self will become harder. Researchers can already correlate particular tendencies in Internet browsing history with symptoms of depression—how long before a computer (or a school administrator, boss, or parent prompted by the machine) is the first to inform someone they may be depressed?

On a related note, Nick Diakopoulos urges reporters to focus more on algorithms:

He wants reporters to learn how to report on algorithms — to investigate them, to critique them — whether by interacting with the technology itself or by talking to the people who design them. Ultimately, writes Diakopoulos in his new white paper, “Algorithmic Accountability Reporting: On the Investigation of Black Boxes,” he wants algorithms to become a beat:

We’re living in a world now where algorithms adjudicate more and more consequential decisions in our lives. It’s not just search engines either; it’s everything from online review systems to educational evaluations, the operation of markets to how political campaigns are run, and even how social services like welfare and public safety are managed. Algorithms, driven by vast troves of data, are the new power brokers in society.

(Image of Wolfram’s chart based on data from his digital pedometer via Stephen Wolfram)