A reader writes:

I’m disappointed that you only put up the numbers from accidental gun deaths. It seems a bit disingenuous, as the number of non-accidental car deaths, pool deaths, etc., are, of course, dramatically lower. In 2010 the FBI recorded 12,996 homicides. Of those, 8,775 were committed with guns. That compares to 1,704 with knives, the next closest, 540 with blunt objects, and 11 with poison. Even if you would argue that, of those killed by guns, many would have been killed with another weapon, it’s hard to see how that would directly play out. How many drive-by knifings can you have? How many people can get hit by crossfire from a baseball bat?

How about suicide? In 2010, we had 19,392 gun suicides. Not so many with cars. And for those who would argue that guns don’t matter when it comes to suicide (i.e. people will kill themselves regardless of what tools they have to accomplish the deed), multiple studies have proven that access to guns dramatically raises the risk of a successful suicide attempt.

But if you want to stick with just accidental deaths, as you’ve done, let’s contextualize it a bit. From 2005-2010, almost 3,800 people in the US died from unintentional shootings. 1,300 of those were under the age of 25. 31% of those shootings could have been prevented by the addition of two devices: a child-proof safety lock and a loading indicator. And 8% of those shootings (that’s 304) were carried out by shots fired from children under the age of six. How many accidental road deaths are caused by drivers who are under the age of six?

So, yes, lots of stuff can kill you. No surprise. But in the US, we’re at a much higher risk of death by firearm because of the lobbying efforts of the industry whose product is designed to kill.

Another reader, from the other side of the debate, quotes Waldman:

On one hand, there are over 300 million of us, so only one in 500,000 Americans is killed every year because his knumbskull cousin said “Hey Bert, is this thing loaded?” before pulling the trigger. You can see that as a small number. The other way to look at it is that each and every day, an American or two loses his or her life this way. In countries with sane gun laws, that 606 number is somewhere closer to zero.

That sentence encapsulates what I hate about the anti-gun crowd.

While Waldman is ahead of the game in that he at least admits that at .5% of all accidental deaths make accidental gun deaths a pretty low priority, he goes on to say that we should eliminate all personal gun ownership to take care of it anyway. Why does this bother me? Well, because it says that he doesn’t value my desire to own a gun to the point where he would take my gun to solve a problem he just admitted was insignificant. So by extension, what I want is even less significant than this insignificant issue.

Look, as a responsible gun owner I want to reduce the number of gun deaths, and there are many ways of doing this, from requiring guns to be locked up when not in use so that minors cannot accidentally shoot somebody, to universal background checks to at least make it difficult for criminals to get their hands on guns. The problem is that it is difficult to work with somebody who puts such a low value on something that you value that they see no reason why anybody would even want what you want.

If you want to know why it is so easy for the NRA to sell the idea that some people want to take your guns away, look no further than Paul Waldman (and Obama, Bloomberg, Feinstein and others), who on one hand say they don’t want to take your guns while making statements that make it clear they don’t value you having one.

Update from a reader:

Your reader wrote, “While Waldman is ahead of the game in that he at least admits that at .5% of all accidental deaths make accidental gun deaths a pretty low priority, he goes on to say that we should eliminate all personal gun ownership to take care of it anyway.” I read the Waldman article you posted, and it mentions no such thing. I started reading other articles Mr. Waldman has written and it’s clear he favors more gun control laws (expanded background checks, limits on amounts of ammunition which can be purchased, are two examples I found), but nowhere have I seen a claim to eliminate all personal gun ownership – and he certainly doesn’t “go on to say” that in the linked article.

In the article he does mention other countries with “sane gun laws.” Few countries totally ban the ownership of guns. There is some chance that Mr. Waldman is speaking of Japan, which does come close to forbidding ownership, but, for example, most of Europe allows private gun ownership. It’s really hard to conclude that elimination of all gun rights is what Mr. Waldman means by the phrase.

Your reader seems to equate any talk of “sane gun laws” with a prohibition of ownership, but goes on to advocate for laws such as “requiring guns to be locked up when not in use so that minors cannot accidentally shoot somebody, to universal background checks to at least make it difficult for criminals to get their hands on guns” which are actually to the left of the Toomey-Manchin bill, which the NRA fought so hard against. The reader seems to have more in common with Mr. Waldman than the NRA, but sees his ally as the enemy.

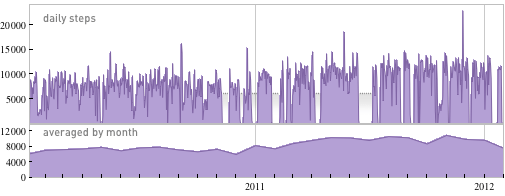

(Chart based on data compiled by the Centers for Disease Control and Prevention, via Leon Neyfakh)